This is the BuildBot documentation for Buildbot version 0.8.7p1.

If you are evaluating Buildbot and would like to get started quickly, start with the Tutorial. Regular users of Buildbot should consult the Manual, and those wishing to modify Buildbot directly will want to be familiar with the Developer's Documentation.

Table Of Contents¶

Buildbot Tutorial¶

Contents:

First Run¶

Goal¶

This tutorial will take you from zero to running your first buildbot master and slave as quickly as possible, without changing the default configuration.

This tutorial is all about instant gratification and the five minute experience: in five minutes we want to convince you that this project Works, and that you should seriously consider spending some more time learning the system. In this tutorial no configuration or code changes are done.

This tutorial assumes that you are running on Unix, but it might be easily adapted to Windows.

For the quickest way through, you should be able to cut and paste each shell block from this tutorial directly into a terminal.

Getting the code¶

There are many ways to get the code on your machine. For this tutorial, we will use easy_install to install and run buildbot. While this isn't the preferred method to install buildbot, it is the simplest one to use for the purposes of this tutorial because it should work on all systems. (The preferred method would be to install buildbot from packages of your distribution.)

To make this work, you will need the following installed:

- python and the development packages for it

- virtualenv

- git

Preferably, use your package installer to install these.

You will also need a working Internet connection, as virtualenv and easy_install will need to download other projects from the Internet.

Note

Buildbot does not require root access. Run the commands in this tutorial as a normal, unprivileged user.

Let's dive in by typing at the terminal:

cd

mkdir -p tmp/buildbot

cd tmp/buildbot

virtualenv --no-site-packages sandbox

source sandbox/bin/activate

easy_install buildbot

Creating a master¶

At the terminal, type:

buildbot create-master master

mv master/master.cfg.sample master/master.cfg

Now start it:

buildbot start master

tail -f master/twistd.log

You will now see all of the log information from the master in this terminal. You should see lines like this:

2011-12-04 10:04:40-0600 [-] Starting factory <buildbot.status.web.baseweb.RotateLogSite instance at 0x2e36638>

2011-12-04 10:04:40-0600 [-] Setting up http.log rotating 10 files of 10000000 bytes each

2011-12-04 10:04:40-0600 [-] WebStatus using (/home/dustin/tmp/buildbot/master/public_html)

2011-12-04 10:04:40-0600 [-] removing 0 old schedulers, updating 0, and adding 1

2011-12-04 10:04:40-0600 [-] adding 1 new changesources, removing 0

2011-12-04 10:04:40-0600 [-] gitpoller: using workdir '/home/dustin/tmp/buildbot/master/gitpoller-workdir'

2011-12-04 10:04:40-0600 [-] gitpoller: initializing working dir from git://github.com/buildbot/pyflakes.git

2011-12-04 10:04:40-0600 [-] configuration update complete

2011-12-04 10:04:41-0600 [-] gitpoller: checking out master

2011-12-04 10:04:41-0600 [-] gitpoller: finished initializing working dir from git://github.com/buildbot/pyflakes.git at rev 1a4af6ec1dbb724b884ea14f439b272f30439e4d

Creating a slave¶

Open a new terminal, and first enter the same sandbox you created before:

cd

cd tmp/buildbot

source sandbox/bin/activate

Install the buildslave command:

easy_install buildbot-slave

Now, create the slave:

buildslave create-slave slave localhost:9989 example-slave pass

The host:port pair, username, and password should be the same as the ones in master.cfg; verify this is the case by looking at the section for c['slaves'] and c['slavePortnum']:

cat master/master.cfg
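If the configuration file is long, a few lines of Python can pull out just the relevant settings. This is a minimal sketch and not part of Buildbot: the helper name find_slave_settings and the sample text are illustrative assumptions, and the helper only does a plain substring search.

```python
def find_slave_settings(cfg_text):
    """Return the lines of a master.cfg that mention the slave settings."""
    keys = ("c['slaves']", "c['slavePortnum']")
    return [line.strip() for line in cfg_text.splitlines()
            if any(key in line for key in keys)]


# Illustrative text only; a real master.cfg may look different.
SAMPLE_CFG = """\
c['slaves'] = [BuildSlave("example-slave", "pass")]
c['slavePortnum'] = 9989
c['title'] = "Pyflakes"
"""

for line in find_slave_settings(SAMPLE_CFG):
    print(line)
```

To run it against the real file, pass open("master/master.cfg").read() instead of the sample text, then compare the values with the arguments given to buildslave create-slave.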

Now, start the slave:

buildslave start slave

Check the slave's log:

tail -f slave/twistd.log

You should see lines like the following at the end of the slave log:

2009-07-29 20:59:18+0200 [Broker,client] message from master: attached

2009-07-29 20:59:18+0200 [Broker,client] SlaveBuilder.remote_print(buildbot-full): message from master: attached

2009-07-29 20:59:18+0200 [Broker,client] sending application-level keepalives every 600 seconds

Meanwhile, in the other terminal, in the master log, if you tail the log you should see lines like this:

2011-03-13 18:46:58-0700 [Broker,1,127.0.0.1] slave 'example-slave' attaching from IPv4Address(TCP, '127.0.0.1', 41306)

2011-03-13 18:46:58-0700 [Broker,1,127.0.0.1] Got slaveinfo from 'example-slave'

2011-03-13 18:46:58-0700 [Broker,1,127.0.0.1] bot attached

2011-03-13 18:46:58-0700 [Broker,1,127.0.0.1] Buildslave example-slave attached to runtests

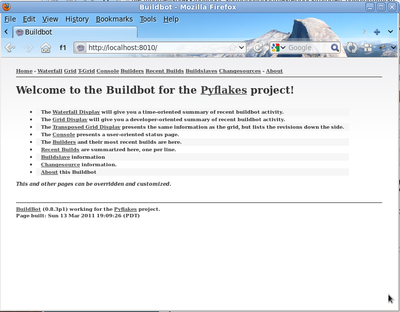

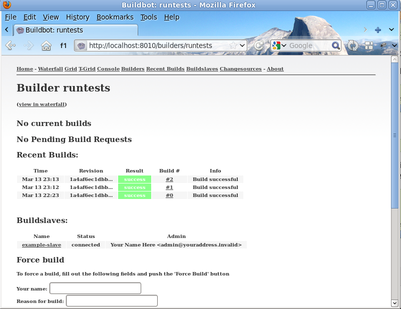

You should now be able to go to http://localhost:8010, where you will see a web page similar to:

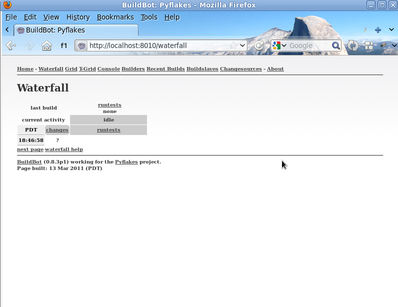

Click on the Waterfall Display link and you get this:

That's the end of the first tutorial. A bit underwhelming, you say? Well, that was the point! We just wanted to get you to dip your toes in the water. It's easy to take your first steps, but this is about as far as we can go without touching the configuration.

You've got a taste now, but you're probably curious for more. Let's step it up a little in the second tutorial by changing the configuration and doing an actual build. Continue on to A Quick Tour.

A Quick Tour¶

Goal¶

This tutorial will expand on the First Run tutorial by taking a quick tour around some of the features of buildbot that are hinted at in the comments in the sample configuration. We will simply change parts of the default configuration and explain the activated features.

As a part of this tutorial, we will make buildbot do a few actual builds.

This section will teach you how to:

- make simple configuration changes and activate them

- deal with configuration errors

- force builds

- enable and control the IRC bot

- enable ssh debugging

- add a 'try' scheduler

Setting Project Name and URL¶

Let's start simple by looking at where you would customize the buildbot's project name and URL.

We continue where we left off in the First Run tutorial.

Open a new terminal, and first enter the same sandbox you created before (where $EDITOR is your editor of choice like vim, gedit, or emacs):

cd

cd tmp/buildbot

source sandbox/bin/activate

$EDITOR master/master.cfg

Now, look for the section marked PROJECT IDENTITY which reads:

####### PROJECT IDENTITY

# the 'title' string will appear at the top of this buildbot

# installation's html.WebStatus home page (linked to the

# 'titleURL') and is embedded in the title of the waterfall HTML page.

c['title'] = "Pyflakes"

c['titleURL'] = "http://divmod.org/trac/wiki/DivmodPyflakes"

If you want, change either of these values to see how the change shows up in the web interface.

After making a change go into the terminal and type:

buildbot reconfig master

You will see a handful of lines of output from the master log, much like this:

2011-12-04 10:11:09-0600 [-] loading configuration from /home/dustin/tmp/buildbot/master/master.cfg

2011-12-04 10:11:09-0600 [-] configuration update started

2011-12-04 10:11:09-0600 [-] builder runtests is unchanged

2011-12-04 10:11:09-0600 [-] removing IStatusReceiver <WebStatus on port tcp:8010 at 0x2aee368>

2011-12-04 10:11:09-0600 [-] (TCP Port 8010 Closed)

2011-12-04 10:11:09-0600 [-] Stopping factory <buildbot.status.web.baseweb.RotateLogSite instance at 0x2e36638>

2011-12-04 10:11:09-0600 [-] adding IStatusReceiver <WebStatus on port tcp:8010 at 0x2c2d950>

2011-12-04 10:11:09-0600 [-] RotateLogSite starting on 8010

2011-12-04 10:11:09-0600 [-] Starting factory <buildbot.status.web.baseweb.RotateLogSite instance at 0x2e36e18>

2011-12-04 10:11:09-0600 [-] Setting up http.log rotating 10 files of 10000000 bytes each

2011-12-04 10:11:09-0600 [-] WebStatus using (/home/dustin/tmp/buildbot/master/public_html)

2011-12-04 10:11:09-0600 [-] removing 0 old schedulers, updating 0, and adding 0

2011-12-04 10:11:09-0600 [-] adding 1 new changesources, removing 1

2011-12-04 10:11:09-0600 [-] gitpoller: using workdir '/home/dustin/tmp/buildbot/master/gitpoller-workdir'

2011-12-04 10:11:09-0600 [-] GitPoller repository already exists

2011-12-04 10:11:09-0600 [-] configuration update complete

Reconfiguration appears to have completed successfully.

The important lines are the ones telling you that it is loading the new configuration at the top, and the one at the bottom saying that the update is complete.
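The same check can be scripted. The following is a minimal sketch, assuming the two marker strings appear exactly as in the log excerpts in this tutorial; the function name reconfig_succeeded is hypothetical and not part of Buildbot.

```python
def reconfig_succeeded(log_lines):
    """Return True if a 'loading configuration' line is later followed
    by a 'configuration update complete' line."""
    loading_seen = False
    for line in log_lines:
        if "loading configuration from" in line:
            loading_seen = True
        elif loading_seen and "configuration update complete" in line:
            return True
    return False


# Lines taken from the log excerpts shown in this tutorial.
SUCCESS_LOG = [
    "2011-12-04 10:11:09-0600 [-] loading configuration from /home/dustin/tmp/buildbot/master/master.cfg",
    "2011-12-04 10:11:09-0600 [-] configuration update started",
    "2011-12-04 10:11:09-0600 [-] configuration update complete",
]
FAILED_LOG = [
    "2011-12-04 10:12:28-0600 [-] loading configuration from /home/dustin/tmp/buildbot/master/master.cfg",
    "2011-12-04 10:12:28-0600 [-] configuration update started",
    "2011-12-04 10:12:28-0600 [-] error while parsing config file",
]

print(reconfig_succeeded(SUCCESS_LOG))
print(reconfig_succeeded(FAILED_LOG))
```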

Now, if you go back to the waterfall page, you will see that the project's name is whatever you changed it to. When you click on the project name at the bottom of the page, it should take you to the URL you put in the configuration.

Configuration Errors¶

It is very common to make a mistake when configuring buildbot, so you might as well see now what happens in that case and what you can do to fix the error.

Open up the config again and introduce a syntax error by removing the first single quote from the first of the two lines you changed, so they read:

c[title'] = "Pyflakes"

c['titleURL'] = "http://divmod.org/trac/wiki/DivmodPyflakes"

This creates a Python SyntaxError. Now go ahead and reconfig the buildmaster:

buildbot reconfig master

This time, the output looks like:

2011-12-04 10:12:28-0600 [-] loading configuration from /home/dustin/tmp/buildbot/master/master.cfg

2011-12-04 10:12:28-0600 [-] configuration update started

2011-12-04 10:12:28-0600 [-] error while parsing config file

2011-12-04 10:12:28-0600 [-] Unhandled Error

Traceback (most recent call last):

File "/home/dustin/tmp/buildbot/sandbox/lib/python2.7/site-packages/buildbot-0.8.5-py2.7.egg/buildbot/master.py", line 197, in loadTheConfigFile

d = self.loadConfig(f)

File "/home/dustin/tmp/buildbot/sandbox/lib/python2.7/site-packages/buildbot-0.8.5-py2.7.egg/buildbot/master.py", line 579, in loadConfig

d.addCallback(do_load)

File "/home/dustin/tmp/buildbot/sandbox/lib/python2.7/site-packages/Twisted-11.1.0-py2.7-linux-x86_64.egg/twisted/internet/defer.py", line 298, in addCallback

callbackKeywords=kw)

File "/home/dustin/tmp/buildbot/sandbox/lib/python2.7/site-packages/Twisted-11.1.0-py2.7-linux-x86_64.egg/twisted/internet/defer.py", line 287, in addCallbacks

self._runCallbacks()

--- <exception caught here> ---

File "/home/dustin/tmp/buildbot/sandbox/lib/python2.7/site-packages/Twisted-11.1.0-py2.7-linux-x86_64.egg/twisted/internet/defer.py", line 545, in _runCallbacks

current.result = callback(current.result, *args, **kw)

File "/home/dustin/tmp/buildbot/sandbox/lib/python2.7/site-packages/buildbot-0.8.5-py2.7.egg/buildbot/master.py", line 226, in do_load

exec f in localDict

exceptions.SyntaxError: EOL while scanning string literal (master.cfg, line 17)

Never saw reconfiguration finish.

This time, it's clear that there was a mistake in the configuration. Luckily, the buildbot master will ignore the wrong configuration and keep running with the previous configuration.

The message is clear enough, so open the configuration again, fix the error, and reconfig the master.
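Because master.cfg is Python source, this kind of mistake can also be caught before running reconfig. The following is a minimal sketch using the built-in compile(); the helper name check_config_syntax is hypothetical. It only detects syntax errors, not wrong keys or failing imports, and it should be run with the same Python interpreter that runs the buildmaster, since accepted syntax differs between Python versions.

```python
def check_config_syntax(source, filename="master.cfg"):
    """Return None if source compiles, else a (line_number, message) tuple."""
    try:
        compile(source, filename, "exec")
    except SyntaxError as err:
        return (err.lineno, err.msg)
    return None


# Illustrative two-line configs; the second drops a quote as in the text above.
GOOD = 'c = {}\nc[\'title\'] = "Pyflakes"\n'
BAD = 'c = {}\nc[title\'] = "Pyflakes"\n'

print(check_config_syntax(GOOD))
print(check_config_syntax(BAD))
```

To check the real file, pass open("master/master.cfg").read() as the source.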

Your First Build¶

By now you're probably thinking: "All this time spent and still not done a single build? What was the name of this project again?"

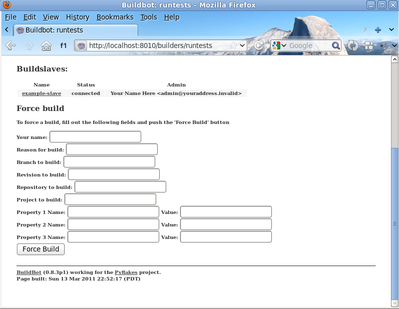

On the waterfall page, click on the runtests link. You'll see a builder page, and in the upper-right corner is a box where you can log in. The default username and password are both "pyflakes". Once you've logged in, you will see some new options that allow you to force a build:

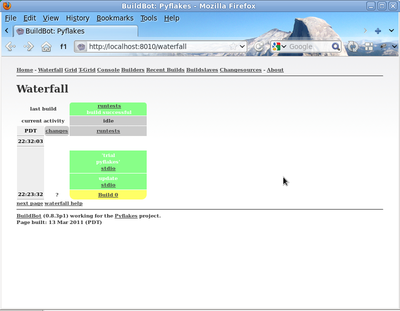

Click Force Build - there's no need to fill in any of the fields in this case. Next, click on view in waterfall.

You will now see:

Enabling the IRC Bot¶

Buildbot includes an IRC bot that you can tell to join a channel. Once there, it can be controlled from the channel and reports on the status of buildbot.

First, start an IRC client of your choice, connect to irc.freenode.org and join an empty channel. In this example we will use #buildbot-test, so go join that channel. (Note: please do not join the main buildbot channel!)

Edit the config and look for the STATUS TARGETS section. Enter these lines below the WebStatus line in master.cfg:

c['status'].append(html.WebStatus(http_port=8010, authz=authz_cfg))

from buildbot.status import words

c['status'].append(words.IRC(host="irc.freenode.org", nick="bbtest",

channels=["#buildbot-test"]))

Reconfigure the build master then do:

cat master/twistd.log | grep IRC

The log output should contain a line like this:

2009-08-01 15:35:20+0200 [-] adding IStatusReceiver <buildbot.status.words.IRC instance at 0x300d290>

You should see the bot now joining in your IRC client. In your IRC channel, type:

bbtest: commands

to get a list of the commands the bot supports.

Let's tell the bot to notify us of certain events. To learn which events we can be notified about, type:

bbtest: help notify

Now let's set some event notifications:

bbtest: notify on started

bbtest: notify on finished

bbtest: notify on failure

The bot should have responded to each of the commands:

<@lsblakk> bbtest: notify on started

<bbtest> The following events are being notified: ['started']

<@lsblakk> bbtest: notify on finished

<bbtest> The following events are being notified: ['started', 'finished']

<@lsblakk> bbtest: notify on failure

<bbtest> The following events are being notified: ['started', 'failure', 'finished']

Now, go back to the web interface and force another build.

Notice how the bot tells you about the start and finish of this build:

< bbtest> build #1 of runtests started, including []

< bbtest> build #1 of runtests is complete: Success [build successful] Build details are at http://localhost:8010/builders/runtests/builds/1

You can also use the bot to force a build:

bbtest: force build runtests test build

But to allow this, you'll need to have allowForce in the IRC configuration:

c['status'].append(words.IRC(host="irc.freenode.org", nick="bbtest",

allowForce=True,

channels=["#buildbot-test"]))

This time, the bot is giving you more output, as it's specifically responding to your direct request to force a build, and explicitly tells you when the build finishes:

<@lsblakk> bbtest: force build runtests test build

< bbtest> build #2 of runtests started, including []

< bbtest> build forced [ETA 0 seconds]

< bbtest> I'll give a shout when the build finishes

< bbtest> build #2 of runtests is complete: Success [build successful] Build details are at http://localhost:8010/builders/runtests/builds/2

You can also see the new builds in the web interface.

Setting Authorized Web Users¶

Further down, look for the WebStatus configuration:

c['status'] = []

from buildbot.status import html

from buildbot.status.web import authz, auth

authz_cfg=authz.Authz(

# change any of these to True to enable; see the manual for more

# options

auth=auth.BasicAuth([("pyflakes","pyflakes")]),

gracefulShutdown = False,

forceBuild = 'auth', # use this to test your slave once it is set up

forceAllBuilds = False,

pingBuilder = False,

stopBuild = False,

stopAllBuilds = False,

cancelPendingBuild = False,

)

c['status'].append(html.WebStatus(http_port=8010, authz=authz_cfg))

The auth.BasicAuth() call defines authorized users and their passwords. You can change these or add new ones. See WebStatus for more about the WebStatus configuration.

Debugging with Manhole¶

You can do some debugging by using manhole, an interactive Python shell. It exposes full access to the buildmaster's account (including the ability to modify and delete files), so it should not be enabled with a weak or easily guessable password.

To use this you will need to install an additional package or two to your virtualenv:

cd

cd tmp/buildbot

source sandbox/bin/activate

easy_install pycrypto

easy_install pyasn1

In your master.cfg find:

c = BuildmasterConfig = {}

Insert the following to enable debugging mode with manhole:

####### DEBUGGING

from buildbot import manhole

c['manhole'] = manhole.PasswordManhole("tcp:1234:interface=127.0.0.1","admin","passwd")

After restarting the master, you can ssh into the master and get an interactive python shell:

ssh -p1234 admin@127.0.0.1

# enter passwd at prompt

Note

The pyasn1-0.1.1 release has a bug which results in an exception similar to this on startup:

exceptions.TypeError: argument 2 must be long, not int

If you see this, the temporary solution is to install the previous version of pyasn1:

pip install pyasn1==0.0.13b

If you want to check which slaves are connected and which builders those slaves are assigned to, you could type the following at the interactive prompt:

>>> master.botmaster.slaves

{'example-slave': <BuildSlave 'example-slave', current builders: runtests>}

Objects can be explored in more depth using dir(x) or the helper function show(x).
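As a small illustration of the dir(x) approach, the sketch below filters out underscore-prefixed names so that only the public attributes remain. The helper public_names and the FakeBotMaster class are hypothetical stand-ins; inside manhole you would pass a real object such as master.botmaster.

```python
def public_names(obj):
    """Return the attribute names of obj that do not start with an underscore."""
    return [name for name in dir(obj) if not name.startswith("_")]


class FakeBotMaster(object):
    # Hypothetical stand-in; the real object lives inside the buildmaster.
    def __init__(self):
        self.slaves = {"example-slave": "<BuildSlave 'example-slave'>"}


print(public_names(FakeBotMaster()))
```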

Adding a 'try' scheduler¶

Buildbot includes a way for developers to submit patches for testing without committing them to the source code control system. (This is really handy for projects that support several operating systems or architectures.)

To set this up, add the following lines to master.cfg:

from buildbot.scheduler import Try_Userpass

c['schedulers'].append(Try_Userpass(

name='try',

builderNames=['runtests'],

port=5555,

userpass=[('sampleuser','samplepass')]))

Then you can submit changes using the try command.

Let's try this out by making a one-line change to pyflakes, say, to make it trace the tree by default:

git clone git://github.com/buildbot/pyflakes.git pyflakes-git

cd pyflakes-git/pyflakes

$EDITOR checker.py

# change "traceTree = False" on line 185 to "traceTree = True"

Then run buildbot's try command as follows:

source ~/tmp/buildbot/sandbox/bin/activate

buildbot try --connect=pb --master=127.0.0.1:5555 --username=sampleuser --passwd=samplepass --vc=git

This will do "git diff" for you and send the resulting patch to the server for build and test against the latest sources from git.

Now go back to the waterfall page, click on the runtests link, and scroll down. You should see that another build has been started with your change (and stdout for the tests should be chock-full of parse trees as a result). The "Reason" for the job will be listed as "'try' job", and the blamelist will be empty.

To make yourself show up as the author of the change, use the --who=emailaddr option on 'buildbot try' to pass your email address.

To make a description of the change show up, use the --properties=comment="this is a comment" option on 'buildbot try'.
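When the try command is launched from a script, it can be easier to assemble the options as a list. The following is a minimal sketch that only builds the argument list from the options shown in this section; the helper name build_try_command and the example address are assumptions, and nothing is executed.

```python
def build_try_command(master, username, passwd, vc="git", who=None, comment=None):
    """Assemble the argument list for 'buildbot try' over a PB connection."""
    argv = [
        "buildbot", "try",
        "--connect=pb",
        "--master=" + master,
        "--username=" + username,
        "--passwd=" + passwd,
        "--vc=" + vc,
    ]
    if who is not None:
        argv.append("--who=" + who)
    if comment is not None:
        argv.append("--properties=comment=" + comment)
    return argv


# dev@example.com is a placeholder address.
command = build_try_command("127.0.0.1:5555", "sampleuser", "samplepass",
                            who="dev@example.com", comment="this is a comment")
print(command)
```

Passing such a list to subprocess.call() avoids shell quoting problems with the comment text.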

To use ssh instead of a private username/password database, see Try_Jobdir.

This is the BuildBot manual for Buildbot version 0.8.7p1.

Buildbot Manual¶

Introduction¶

BuildBot is a system to automate the compile/test cycle required by most software projects to validate code changes. By automatically rebuilding and testing the tree each time something has changed, build problems are pinpointed quickly, before other developers are inconvenienced by the failure. The guilty developer can be identified and harassed without human intervention. By running the builds on a variety of platforms, developers who do not have the facilities to test their changes everywhere before checkin will at least know shortly afterwards whether they have broken the build or not. Warning counts, lint checks, image size, compile time, and other build parameters can be tracked over time, are more visible, and are therefore easier to improve.

The overall goal is to reduce tree breakage and provide a platform to run tests or code-quality checks that are too annoying or pedantic for any human to waste their time with. Developers get immediate (and potentially public) feedback about their changes, encouraging them to be more careful about testing before checkin.

Features:

- run builds on a variety of slave platforms

- arbitrary build process: handles projects using C, Python, whatever

- minimal host requirements: python and Twisted

- slaves can be behind a firewall if they can still do checkout

- status delivery through web page, email, IRC, other protocols

- track builds in progress, provide estimated completion time

- flexible configuration by subclassing generic build process classes

- debug tools to force a new build, submit fake Changes, query slave status

- released under the GPL

History and Philosophy¶

The Buildbot was inspired by a similar project built for a development team writing a cross-platform embedded system. The various components of the project were supposed to compile and run on several flavors of unix (linux, solaris, BSD), but individual developers had their own preferences and tended to stick to a single platform. From time to time, incompatibilities would sneak in (some unix platforms want to use string.h, some prefer strings.h), and then the tree would compile for some developers but not others. The buildbot was written to automate the human process of walking into the office, updating a tree, compiling (and discovering the breakage), finding the developer at fault, and complaining to them about the problem they had introduced. With multiple platforms it was difficult for developers to do the right thing (compile their potential change on all platforms); the buildbot offered a way to help.

Another problem was when programmers would change the behavior of a library without warning its users, or change internal aspects that other code was (unfortunately) depending upon. Adding unit tests to the codebase helps here: if an application's unit tests pass despite changes in the libraries it uses, you can have more confidence that the library changes haven't broken anything. Many developers complained that the unit tests were inconvenient or took too long to run: having the buildbot run them reduces the developer's workload to a minimum.

In general, having more visibility into the project is always good, and automation makes it easier for developers to do the right thing. When everyone can see the status of the project, developers are encouraged to keep the tree in good working order. Unit tests that aren't run on a regular basis tend to suffer from bitrot just like code does: exercising them on a regular basis helps to keep them functioning and useful.

The current version of the Buildbot is additionally targeted at distributed free-software projects, where resources and platforms are only available when provided by interested volunteers. The buildslaves are designed to require an absolute minimum of configuration, reducing the effort a potential volunteer needs to expend to be able to contribute a new test environment to the project. The goal is that anyone who wishes that a given project would run on their favorite platform should be able to offer that project a buildslave, running on that platform, where they can verify that their portability code works, and keeps working.

System Architecture¶

The Buildbot consists of a single buildmaster and one or more buildslaves, connected in a star topology. The buildmaster makes all decisions about what, when, and how to build. It sends commands to be run on the buildslaves, which simply execute the commands and return the results. (Certain steps involve more local decision making, where the overhead of sending a lot of commands back and forth would be inappropriate, but in general the buildmaster is responsible for everything.)

The buildmaster is usually fed Changes by some sort of version control system (Change Sources), which may cause builds to be run. As the builds are performed, various status messages are produced, which are then sent to any registered Status Targets.

The buildmaster is configured and maintained by the buildmaster admin, who is generally the project team member responsible for build process issues. Each buildslave is maintained by a buildslave admin, who does not need to be quite as involved. Generally slaves are run by anyone who has an interest in seeing the project work well on their favorite platform.

BuildSlave Connections¶

The buildslaves are typically run on a variety of separate machines, at least one per platform of interest. These machines connect to the buildmaster over a TCP connection to a publicly visible port. As a result, the buildslaves can live behind a NAT box or similar firewalls, as long as they can get to the buildmaster. The TCP connections are initiated by the buildslave and accepted by the buildmaster, but traffic flows both ways within this connection: commands travel from the buildmaster to the buildslave, and results travel back. The buildmaster is always in charge, so commands never travel from the buildslave to the buildmaster.

To perform builds, the buildslaves must typically obtain source code from a CVS/SVN/etc repository. Therefore they must also be able to reach the repository. The buildmaster provides instructions for performing builds, but does not provide the source code itself.

Buildmaster Architecture¶

The buildmaster consists of several pieces:

- Change Sources, which create a Change object each time something is modified in the VC repository. Most ChangeSources listen for messages from a hook script of some sort. Some sources actively poll the repository on a regular basis. All Changes are fed to the Schedulers.

- Schedulers, which decide when builds should be performed. They collect Changes into BuildRequests, which are then queued for delivery to Builders until a buildslave is available.

- Builders, which control exactly how each build is performed (with a series of BuildSteps, configured in a BuildFactory). Each Build is run on a single buildslave.

- Status plugins, which deliver information about the build results through protocols like HTTP, mail, and IRC.

Each Builder is configured with a list of BuildSlaves that it will use for its builds. These buildslaves are expected to behave identically: the only reason to use multiple BuildSlaves for a single Builder is to provide a measure of load-balancing.

Within a single BuildSlave, each Builder creates its own SlaveBuilder instance. These SlaveBuilders operate independently from each other. Each gets its own base directory to work in. It is quite common to have many Builders sharing the same buildslave. For example, there might be two buildslaves: one for i386, and a second for PowerPC. There may then be a pair of Builders that do a full compile/test run, one for each architecture, and a lone Builder that creates snapshot source tarballs if the full builders complete successfully. The full builders would each run on a single buildslave, whereas the tarball creation step might run on either buildslave (since the platform doesn't matter when creating source tarballs). In this case, the mapping would look like:

Builder(full-i386) -> BuildSlaves(slave-i386)

Builder(full-ppc) -> BuildSlaves(slave-ppc)

Builder(source-tarball) -> BuildSlaves(slave-i386, slave-ppc)

and each BuildSlave would have two SlaveBuilders inside it, one for a full builder, and a second for the source-tarball builder.
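The mapping above can be written down as plain data and inverted to see which SlaveBuilders each BuildSlave ends up hosting. This is a minimal sketch using ordinary dictionaries, not Buildbot's own classes; the function name slave_builders is hypothetical.

```python
# Builder -> BuildSlaves, as in the example above.
BUILDER_SLAVES = {
    "full-i386": ["slave-i386"],
    "full-ppc": ["slave-ppc"],
    "source-tarball": ["slave-i386", "slave-ppc"],
}


def slave_builders(builder_slaves):
    """Invert the Builder -> BuildSlaves mapping into BuildSlave -> Builders."""
    per_slave = {}
    for builder, slaves in builder_slaves.items():
        for slave in slaves:
            per_slave.setdefault(slave, []).append(builder)
    return {slave: sorted(builders) for slave, builders in per_slave.items()}


for slave, builders in sorted(slave_builders(BUILDER_SLAVES).items()):
    print(slave, builders)
```

Each slave is listed with two builders, matching the two SlaveBuilders described in the text.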

Once a SlaveBuilder is available, the Builder pulls one or more BuildRequests off its incoming queue. (It may pull more than one if it determines that it can merge the requests together; for example, there may be multiple requests to build the current HEAD revision). These requests are merged into a single Build instance, which includes the SourceStamp that describes what exact version of the source code should be used for the build. The Build is then randomly assigned to a free SlaveBuilder and the build begins.

The behaviour when BuildRequests are merged can be customized; see Merging Build Requests.
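The pull-and-merge step can be pictured with a simplified model. In the sketch below, two requests are treated as mergeable when they name the same revision; that rule, the dictionary layout, and the function names are assumptions made for illustration, since the real merge decision is made by Buildbot and is configurable.

```python
import random


def merge_requests(queue):
    """Take the first request plus every queued request for the same revision.

    Returns (merged, remaining). Equality of revisions stands in for the
    real merge test, which is an assumption of this sketch.
    """
    if not queue:
        return [], []
    revision = queue[0]["revision"]
    merged = [req for req in queue if req["revision"] == revision]
    remaining = [req for req in queue if req["revision"] != revision]
    return merged, remaining


def pick_slavebuilder(free_slavebuilders, rng=random):
    """Pick one free SlaveBuilder at random, as the text describes."""
    return rng.choice(free_slavebuilders)


queue = [
    {"id": 1, "revision": "HEAD"},
    {"id": 2, "revision": "HEAD"},
    {"id": 3, "revision": "abc123"},
]
merged, remaining = merge_requests(queue)
print([req["id"] for req in merged], [req["id"] for req in remaining])
print(pick_slavebuilder(["slave-i386", "slave-ppc"]))
```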

Status Delivery Architecture¶

The buildmaster maintains a central Status object, to which various status plugins are connected. Through this Status object, a full hierarchy of build status objects can be obtained.

The configuration file controls which status plugins are active. Each status plugin gets a reference to the top-level Status object. From there they can request information on each Builder, Build, Step, and LogFile. This query-on-demand interface is used by the html.Waterfall plugin to create the main status page each time a web browser hits the main URL.

The status plugins can also subscribe to hear about new Builds as they occur: this is used by the MailNotifier to create new email messages for each recently-completed Build.

The Status object records the status of old builds on disk in the buildmaster's base directory. This allows it to return information about historical builds.

There are also status objects that correspond to Schedulers and BuildSlaves. These allow status plugins to report information about upcoming builds, and the online/offline status of each buildslave.
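The two access styles described above, querying on demand and subscribing to new builds, can be sketched with a toy class. Everything here is hypothetical (the class, its method names, and the in-memory history); it is not Buildbot's Status API, and it keeps history in memory rather than on disk.

```python
class Status(object):
    """Toy central status object: keeps history and notifies subscribers."""

    def __init__(self):
        self._history = {}      # builder name -> list of (number, result)
        self._subscribers = []  # callbacks invoked for each finished build

    def subscribe(self, callback):
        """Register a callback, as a mail-style plugin would."""
        self._subscribers.append(callback)

    def build_finished(self, builder, number, result):
        """Record a finished build and push it to every subscriber."""
        self._history.setdefault(builder, []).append((number, result))
        for callback in self._subscribers:
            callback(builder, number, result)

    def builds_for(self, builder):
        """Query on demand, as a web-page-style plugin would."""
        return list(self._history.get(builder, []))


status = Status()
mailbox = []
status.subscribe(lambda builder, number, result: mailbox.append((builder, number, result)))
status.build_finished("runtests", 1, "success")
status.build_finished("runtests", 2, "failure")
print(mailbox)
print(status.builds_for("runtests"))
```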

Control Flow¶

A day in the life of the buildbot:

- A developer commits some source code changes to the repository. A hook script or commit trigger of some sort sends information about this change to the buildmaster through one of its configured Change Sources. This notification might arrive via email, or over a network connection (either initiated by the buildmaster as it subscribes to changes, or by the commit trigger as it pushes Changes towards the buildmaster). The Change contains information about who made the change, what files were modified, which revision contains the change, and any checkin comments.

- The buildmaster distributes this change to all of its configured Schedulers. Any important changes cause the tree-stable-timer to be started, and the Change is added to a list of those that will go into a new Build. When the timer expires, a Build is started on each of a set of configured Builders, all compiling/testing the same source code. Unless configured otherwise, all Builds run in parallel on the various buildslaves.

- The Build consists of a series of Steps. Each Step causes some number of commands to be invoked on the remote buildslave associated with that Builder. The first step is almost always to perform a checkout of the appropriate revision from the same VC system that produced the Change. The rest generally perform a compile and run unit tests. As each Step runs, the buildslave reports back command output and return status to the buildmaster.

- As the Build runs, status messages like "Build Started", "Step Started", "Build Finished", etc, are published to a collection of Status Targets. One of these targets is usually the HTML Waterfall display, which shows a chronological list of events, and summarizes the results of the most recent build at the top of each column. Developers can periodically check this page to see how their changes have fared. If they see red, they know that they've made a mistake and need to fix it. If they see green, they know that they've done their duty and don't need to worry about their change breaking anything.

- If a MailNotifier status target is active, the completion of a build will cause email to be sent to any developers whose Changes were incorporated into this Build. The MailNotifier can be configured to only send mail upon failing builds, or for builds which have just transitioned from passing to failing. Other status targets can provide similar real-time notification via different communication channels, like IRC.
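The tree-stable-timer step in the flow above can be simulated without real clocks. The sketch below assumes that every important change restarts the timer and that a build fires once the tree has been quiet for the full interval; that restart behaviour, the function name, and the use of plain numbers for timestamps are assumptions of this sketch.

```python
def schedule_builds(change_times, stable_secs):
    """Group change timestamps into builds.

    Returns a list of (build_start_time, changes_in_build) tuples, where a
    build starts stable_secs after the last change in its group.
    """
    builds = []
    pending = []
    for when in sorted(change_times):
        # The timer expired before this change arrived: start the build.
        if pending and when >= pending[-1] + stable_secs:
            builds.append((pending[-1] + stable_secs, pending))
            pending = []
        pending.append(when)
    if pending:
        builds.append((pending[-1] + stable_secs, pending))
    return builds


# Changes at t=0 and t=10 share one build; the change at t=100 gets its own.
print(schedule_builds([0, 10, 100], 60))
```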

Installation¶

Buildbot Components¶

Buildbot is shipped in two components: the buildmaster (called buildbot for legacy reasons) and the buildslave. The buildslave component has far fewer requirements, and is more broadly compatible than the buildmaster. You will need to carefully pick the environment in which to run your buildmaster, but the buildslave should be able to run just about anywhere.

It is possible to install the buildmaster and buildslave on the same system, although for anything but the smallest installation this arrangement will not be very efficient.

Requirements¶

Common Requirements¶

At a bare minimum, you'll need the following for both the buildmaster and a buildslave:

Python: http://www.python.org

Buildbot requires python-2.5 or later on the master, although Python-2.7 is recommended. The slave runs on Python-2.4.

Twisted: http://twistedmatrix.com

Buildbot requires Twisted-9.0.0 or later on the master, and Twisted-8.1.0 on the slave. As always, the most recent version is recommended.

In some cases, Twisted is delivered as a collection of subpackages. You'll need at least "Twisted" (the core package), and you'll also want TwistedMail, TwistedWeb, and TwistedWords (for sending email, serving a web status page, and delivering build status via IRC, respectively). You might also want TwistedConch (for the encrypted Manhole debug port). Note that Twisted requires ZopeInterface to be installed as well.

Of course, your project's build process will impose additional requirements on the buildslaves. These hosts must have all the tools necessary to compile and test your project's source code.

Windows Support¶

Buildbot - both master and slave - runs well natively on Windows. The slave runs well on Cygwin, but because of problems with SQLite on Cygwin, the master does not.

Buildbot's Windows testing is limited to the most recent Twisted and Python versions. For best results, use the most recent available versions of these libraries on Windows.

Pywin32: http://sourceforge.net/projects/pywin32/

Twisted requires PyWin32 in order to spawn processes on Windows.

Buildmaster Requirements¶

sqlite3: http://www.sqlite.org

Buildbot requires SQLite to store its state. Version 3.7.0 or higher is recommended, although Buildbot will run against earlier versions -- at the risk of "Database is locked" errors. The minimum version is 3.4.0, below which parallel database queries and schema introspection fail.

pysqlite: http://pypi.python.org/pypi/pysqlite

The SQLite Python package is required for python-2.5 and earlier (it is already included in python-2.5 and later, but the version in python-2.5 has nasty bugs).

simplejson: http://pypi.python.org/pypi/simplejson

The simplejson package is required for python-2.5 and earlier (it is already included as json in python-2.6 and later).

Jinja2: http://jinja.pocoo.org/

Buildbot requires Jinja version 2.1 or higher.

Jinja2 is a general purpose templating language and is used by Buildbot to generate the HTML output.

SQLAlchemy: http://www.sqlalchemy.org/

Buildbot requires SQLAlchemy 0.6.0 or higher. SQLAlchemy allows Buildbot to build database schemas and queries for a wide variety of database systems.

SQLAlchemy-Migrate: http://code.google.com/p/sqlalchemy-migrate/

Buildbot requires one of the following SQLAlchemy-Migrate versions: 0.6.1, 0.7.0, or 0.7.1. Sadly, Migrate's inter-version compatibility is not good, so other versions - newer or older - are unlikely to work correctly. Buildbot uses SQLAlchemy-Migrate to manage schema upgrades from version to version.

Python-Dateutil: http://labix.org/python-dateutil

The Nightly scheduler requires Python-Dateutil version 1.5 (the last version to support Python-2.x). This is a small, pure-python library. Buildbot will function properly without it if the Nightly scheduler is not used.
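The SQLite version thresholds listed above (3.4.0 as the minimum, 3.7.0 as the recommended version) can be checked from Python before installing the master. The following is only a sketch: the function names `parse_version` and `classify_sqlite` are made up for this example and are not part of Buildbot.

```python
import sqlite3

# Thresholds taken from the Buildmaster Requirements text.
MINIMUM = (3, 4, 0)
RECOMMENDED = (3, 7, 0)


def parse_version(text):
    """Turn '3.7.17' into (3, 7, 17), padding or trimming to three parts."""
    parts = [int(part) for part in text.split(".")[:3]]
    parts += [0] * (3 - len(parts))
    return tuple(parts)


def classify_sqlite(version_text):
    """Return 'too old', 'usable' or 'recommended' for a SQLite version string."""
    version = parse_version(version_text)
    if version < MINIMUM:
        return "too old"
    if version < RECOMMENDED:
        return "usable"
    return "recommended"


if __name__ == "__main__":
    # sqlite3.sqlite_version is the version of the SQLite library itself,
    # not the version of the Python binding.
    print(sqlite3.sqlite_version, classify_sqlite(sqlite3.sqlite_version))
```

A result of "usable" corresponds to the range in which Buildbot runs but may hit "Database is locked" errors.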

Installing the code¶

The Distribution Package¶

Buildbot comes in two parts: buildbot (the master) and buildbot-slave (the slave). The two can be installed individually or together.

Installation From PyPI¶

The easiest way to install Buildbot is using 'pip'. For the master:

pip install buildbot

and for the slave:

pip install buildbot-slave

Installation From Tarballs¶

Buildbot and Buildslave are installed using the standard python distutils process. For either component, after unpacking the tarball, the process is:

python setup.py build

python setup.py install

where the install step may need to be done as root. This will put the bulk of the code in somewhere like /usr/lib/pythonx.y/site-packages/buildbot. It will also install the buildbot command-line tool in /usr/bin/buildbot.

If the environment variable $NO_INSTALL_REQS is set to 1, then setup.py will not try to install Buildbot's requirements. This is usually only useful when building a Buildbot package.

To test this, shift to a different directory (like /tmp), and run:

buildbot --version

# or

buildslave --version

If it shows you the versions of Buildbot and Twisted, the install went ok. If it says "no such command" or it gets an ImportError when it tries to load the libraries, then something went wrong. pydoc buildbot is another useful diagnostic tool.

Windows users will find these files in other places. You will need to make sure that python can find the libraries, and will probably find it convenient to have buildbot on your PATH.

Installation in a Virtualenv¶

If you cannot or do not wish to install the buildbot into a site-wide location like /usr or /usr/local, you can also install it into the account's home directory or any other location using a tool like virtualenv.

Running Buildbot's Tests (optional)¶

If you wish, you can run the buildbot unit test suite. First, ensure you have the mock Python module installed from PyPI. This module is not required for ordinary Buildbot operation - only to run the tests. Note that this is not the same as the Fedora mock package! You can check with:

python -mmock

Then, run the tests:

PYTHONPATH=. trial buildbot.test

# or

PYTHONPATH=. trial buildslave.test

Nothing should fail, although a few might be skipped.

If any of the tests fail for reasons other than a missing mock, you should stop and investigate the cause before continuing the installation process, as it will probably be easier to track down the bug early. In most cases, the problem is incorrectly installed Python modules or a badly configured PYTHONPATH. This may be a good time to contact the Buildbot developers for help.

Creating a buildmaster¶

As you learned earlier (System Architecture), the buildmaster runs on a central host (usually one that is publicly visible, so everybody can check on the status of the project), and controls all aspects of the buildbot system.

You will probably wish to create a separate user account for the buildmaster, perhaps named buildmaster. Do not run the buildmaster as root!

You need to choose a directory for the buildmaster, called the basedir. This directory will be owned by the buildmaster. It will contain configuration, the database, and status information - including logfiles. On a large buildmaster this directory will see a lot of activity, so it should be on a disk with adequate space and speed.

Once you've picked a directory, use the buildbot create-master command to create the directory and populate it with startup files:

buildbot create-master -r basedir

You will need to create a configuration file before starting the buildmaster. Most of the rest of this manual is dedicated to explaining how to do this. A sample configuration file is placed in the working directory, named master.cfg.sample, which can be copied to master.cfg and edited to suit your purposes.

(Internal details: This command creates a file named buildbot.tac that contains all the state necessary to create the buildmaster. Twisted has a tool called twistd which can use this .tac file to create and launch a buildmaster instance. twistd takes care of logging and daemonization (running the program in the background). /usr/bin/buildbot is a front end which runs twistd for you.)

Using A Database Server¶

If you want to use a database server (e.g., MySQL or Postgres) as the database backend for your Buildbot, add the --db option to the create-master invocation to specify the connection string for the database, and make sure that the same URL appears in the db_url of the db parameter in your configuration file.
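As an illustration, the matching entry in master.cfg might look like the excerpt below. This is a sketch only: the URL is a placeholder (user bbuser, database buildbot on localhost), and the same string would be passed to create-master with the --db option.

```python
# Excerpt in the style of master.cfg; the URL below is a placeholder.
c = BuildmasterConfig = {}

# Must match the value given to `buildbot create-master --db=...`.
c['db'] = {
    'db_url': "mysql://bbuser:<password>@localhost/buildbot",
}
```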

Additional Requirements¶

Depending on the selected database, further Python packages will be required. Consult the SQLAlchemy dialect list for a full description. The most common choice for MySQL is

MySQL-python: http://mysql-python.sourceforge.net/

To communicate with MySQL, SQLAlchemy requires MySQL-python. Any reasonably recent version of MySQL-python should suffice.

The most common choice for Postgres is

Psycopg: http://initd.org/psycopg/

SQLAlchemy uses Psycopg to communicate with Postgres. Any reasonably recent version should suffice.

Buildmaster Options¶

This section lists options to the create-master command. You can also type buildbot create-master --help for an up-to-the-moment summary.

--force

With this option, create-master will re-use an existing master directory.

--no-logrotate

This disables the internal log management mechanism. With this option, the buildmaster does not override the default logfile name and its behaviour, which makes it possible to control them with command-line options of the twistd daemon.

--relocatable

This creates a "relocatable" buildbot.tac, which uses relative paths instead of absolute paths, so that the buildmaster directory can be moved about.

--config

The name of the configuration file to use. This configuration file need not reside in the buildmaster directory.

--log-size

This is the size, in bytes, at which the Twisted log files are rotated. The default is 10MiB.

--log-count

This is the number of log rotations to keep around. You can either specify a number or None to keep all twistd.log files around. The default is 10.

--db

The database that the Buildmaster should use. Note that the same value must be added to the configuration file.
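When create-master is driven from a provisioning script, the options above can be assembled into an argument list. The helper below is a hypothetical sketch (the name `create_master_argv` is not part of Buildbot); it only builds the list and uses only the options described in this section.

```python
def create_master_argv(basedir, force=False, relocatable=False,
                       config=None, log_size=None, log_count=None, db=None):
    """Assemble an argument list for `buildbot create-master`."""
    argv = ["buildbot", "create-master"]
    if force:
        argv.append("--force")
    if relocatable:
        argv.append("--relocatable")
    if config is not None:
        argv.append("--config=%s" % config)
    if log_size is not None:
        argv.append("--log-size=%d" % log_size)
    if log_count is not None:
        # A number, or None to keep all twistd.log files.
        argv.append("--log-count=%s" % log_count)
    if db is not None:
        argv.append("--db=%s" % db)
    argv.append(basedir)
    return argv


print(create_master_argv("basedir", relocatable=True, log_count=5))
```

The resulting list could then be handed to something like subprocess.check_call; building the list separately keeps the option handling easy to inspect.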

Upgrading an Existing Buildmaster¶

If you have just installed a new version of the Buildbot code, and you have buildmasters that were created using an older version, you'll need to upgrade these buildmasters before you can use them. The upgrade process adds and modifies files in the buildmaster's base directory to make it compatible with the new code.

buildbot upgrade-master basedir

This command will also scan your master.cfg file for incompatibilities (by loading it and printing any errors or deprecation warnings that occur). Each buildbot release tries to be compatible with configurations that worked cleanly (i.e. without deprecation warnings) on the previous release: any functions or classes that are to be removed will first be deprecated in a release, to give you a chance to start using the replacement.

The upgrade-master command is idempotent. It is safe to run it multiple times. After each upgrade of the buildbot code, you should use upgrade-master on all your buildmasters.

In general, Buildbot slaves and masters can be upgraded independently, although some new features will not be available, depending on the master and slave versions.

Beyond this general information, read all of the sections below that apply to versions through which you are upgrading.

Version-specific Notes¶

Upgrading a Buildmaster to Buildbot-0.7.6¶

The 0.7.6 release introduced the public_html/ directory, which contains index.html and other files served by the WebStatus and Waterfall status displays. The upgrade-master command will create these files if they do not already exist. It will not modify existing copies, but it will write a new copy in e.g. index.html.new if the new version differs from the version that already exists.

Upgrading a Buildmaster to Buildbot-0.8.0¶

Buildbot-0.8.0 introduces a database backend, which is SQLite by default. The upgrade-master command will automatically create and populate this database with the changes the buildmaster has seen. Note that, as of this release, build history is not contained in the database, and is thus not migrated.

The upgrade process renames the Changes pickle ($basedir/changes.pck) to changes.pck.old once the upgrade is complete. To reverse the upgrade, simply downgrade Buildbot and move this file back to its original name. You may also wish to delete the state database (state.sqlite).

Upgrading into a non-SQLite database¶

If you are not using sqlite, you will need to add an entry into your master.cfg to reflect the database version you are using. The upgrade process does not edit your master.cfg for you, so add something like:

# for using mysql:

c['db_url'] = 'mysql://bbuser:<password>@localhost/buildbot'

Once the parameter has been added, invoke upgrade-master. This will extract the DB url from your configuration file.

buildbot upgrade-master

See Database Specification for more options to specify a database.

The upgrade process assumes that strings in your Changes pickle are encoded in UTF-8 (or plain ASCII). If this is not the case, and if there are non-UTF-8 characters in the pickle, the upgrade will fail with a suitable error message. If this occurs, you have two options. If the change history is not important to your purpose, you can simply delete changes.pck.

If you would like to keep the change history, then you will need to figure out which encoding is in use, and use contrib/fix_changes_pickle_encoding.py (Contrib Scripts) to rewrite the changes pickle into Unicode before upgrading the master. A typical invocation (with Mac-Roman encoding) might look like:

$ python $buildbot/contrib/fix_changes_pickle_encoding.py changes.pck macroman

decoding bytestrings in changes.pck using macroman

converted 11392 strings

backing up changes.pck to changes.pck.old

If your Changes pickle uses multiple encodings, you're on your own, but the script in contrib may provide a good starting point for the fix.
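To see what "non-UTF-8 characters" means in practice, the sketch below checks whether a byte string decodes as UTF-8 and shows the same text encoded as Mac-Roman failing that check. It is independent of the contrib script; the helper name `decodes_as_utf8` is made up for this example.

```python
def decodes_as_utf8(raw):
    """Return True if the byte string is valid UTF-8 (plain ASCII included)."""
    try:
        raw.decode("utf-8")
    except UnicodeDecodeError:
        return False
    return True


# The word "café" as bytes in two encodings, written as escapes so the
# example does not depend on the encoding of this source file.
utf8_bytes = b"caf\xc3\xa9"     # UTF-8
macroman_bytes = b"caf\x8e"     # Mac-Roman

print(decodes_as_utf8(utf8_bytes))       # True
print(decodes_as_utf8(macroman_bytes))   # False
# Decoded with the right codec, both byte strings give the same text.
print(macroman_bytes.decode("mac_roman") == utf8_bytes.decode("utf-8"))  # True
```

Trying a few candidate codecs on a string that is known to contain accented characters is one way to work out which encoding a pickle uses.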

Upgrading a Buildmaster to Later Versions¶

Up to Buildbot version 0.8.7p1, no further steps beyond those described above are required.

Creating a buildslave¶

Typically, you will be adding a buildslave to an existing buildmaster, to provide additional architecture coverage. The buildbot administrator will give you several pieces of information necessary to connect to the buildmaster. You should also be somewhat familiar with the project being tested, so you can troubleshoot build problems locally.

The buildbot exists to make sure that the project's stated "how to build it" process actually works. To this end, the buildslave should run in an environment just like that of your regular developers. Typically the project build process is documented somewhere (README, INSTALL, etc), in a document that should mention all library dependencies and contain a basic set of build instructions. This document will be useful as you configure the host and account in which the buildslave runs.

Here's a good checklist for setting up a buildslave:

- Set up the account

It is recommended (although not mandatory) to set up a separate user account for the buildslave. This account is frequently named buildbot or buildslave. This serves to isolate your personal working environment from that of the slave, and helps to minimize the security threat posed by letting possibly-unknown contributors run arbitrary code on your system. The account should have a minimum of fancy init scripts.

- Install the buildbot code

Follow the instructions given earlier (Installing the code). If you use a separate buildslave account, and you didn't install the buildbot code to a shared location, then you will need to install it with --home=~ for each account that needs it.

- Set up the host

Make sure the host can actually reach the buildmaster. Usually the buildmaster is running a status webserver on the same machine, so simply point your web browser at it and see if you can get there. Install whatever additional packages or libraries the project's INSTALL document advises. (or not: if your buildslave is supposed to make sure that building without optional libraries still works, then don't install those libraries).

Again, these libraries don't necessarily have to be installed to a site-wide shared location, but they must be available to your build process. Accomplishing this is usually very specific to the build process, so installing them to /usr or /usr/local is usually the best approach.

- Test the build process

Follow the instructions in the INSTALL document, in the buildslave's account. Perform a full CVS (or whatever) checkout, configure, make, run tests, etc. Confirm that the build works without manual fussing. If it doesn't work when you do it by hand, it will be unlikely to work when the buildbot attempts to do it in an automated fashion.

- Choose a base directory

This should be somewhere in the buildslave's account, typically named after the project which is being tested. The buildslave will not touch any file outside of this directory. Something like ~/Buildbot or ~/Buildslaves/fooproject is appropriate.

- Get the buildmaster host/port, botname, and password

When the buildbot admin configures the buildmaster to accept and use your buildslave, they will provide you with the following pieces of information:

- your buildslave's name

- the password assigned to your buildslave

- the hostname and port number of the buildmaster, e.g. buildbot.example.org:8007

- Create the buildslave

Now run the 'buildslave' command as follows:

buildslave create-slave BASEDIR MASTERHOST:PORT SLAVENAME PASSWORD

This will create the base directory and a collection of files inside, including the buildbot.tac file that contains all the information you passed to the buildslave command.

- Fill in the hostinfo files

When it first connects, the buildslave will send a few files up to the buildmaster which describe the host that it is running on. These files are presented on the web status display so that developers have more information to reproduce any test failures that are witnessed by the buildbot. There are sample files in the info subdirectory of the buildbot's base directory. You should edit these to correctly describe you and your host.

BASEDIR/info/admin should contain your name and email address. This is the buildslave admin address, and will be visible from the build status page (so you may wish to munge it a bit if address-harvesting spambots are a concern).

BASEDIR/info/host should be filled with a brief description of the host: OS, version, memory size, CPU speed, versions of relevant libraries installed, and finally the version of the buildbot code which is running the buildslave.

The optional BASEDIR/info/access_uri can specify a URI which will connect a user to the machine. Many systems accept ssh://hostname URIs for this purpose.

If you run many buildslaves, you may want to create a single ~buildslave/info file and share it among all the buildslaves with symlinks.

Buildslave Options¶

There are a handful of options you might want to use when creating the buildslave with the buildslave create-slave <options> DIR <params> command. You can type buildslave create-slave --help for a summary. To use these, just include them on the buildslave create-slave command line, like this:

buildslave create-slave --umask=022 ~/buildslave buildmaster.example.org:42012 {myslavename} {mypasswd}

- --no-logrotate¶

This disables the internal buildslave log management mechanism. With this option, the buildslave does not override the default logfile name and its behaviour, which makes it possible to control them with command-line options of the twistd daemon.

- --usepty¶

This is a boolean flag that tells the buildslave whether to launch child processes in a PTY or with regular pipes (the default) when the master does not specify. This option is deprecated, as this particular parameter is better specified on the master.

- --umask¶

This is a string (generally an octal representation of an integer) which will cause the buildslave process' umask value to be set shortly after initialization. The twistd daemonization utility forces the umask to 077 at startup (which means that all files created by the buildslave or its child processes will be unreadable by any user other than the buildslave account). If you want build products to be readable by other accounts, you can add --umask=022 to tell the buildslave to fix the umask after twistd clobbers it. If you want build products to be writable by other accounts too, use --umask=000, but this is likely to be a security problem.

- --keepalive¶

This is a number that indicates how frequently keepalive messages should be sent from the buildslave to the buildmaster, expressed in seconds. The default (600) causes a message to be sent to the buildmaster at least once every 10 minutes. To set this to a lower value, use e.g. --keepalive=120.

If the buildslave is behind a NAT box or stateful firewall, these messages may help to keep the connection alive: some NAT boxes tend to forget about a connection if it has not been used in a while. When this happens, the buildmaster will think that the buildslave has disappeared, and builds will time out. Meanwhile the buildslave will not realize that anything is wrong.

- --maxdelay¶

This is a number that indicates the maximum amount of time the buildslave will wait between connection attempts, expressed in seconds. The default (300) causes the buildslave to wait at most 5 minutes before trying to connect to the buildmaster again.

- --log-size¶

This is the size, in bytes, at which the Twisted log files are rotated.

- --log-count¶

This is the number of log rotations to keep around. You can either specify a number or None to keep all twistd.log files around. The default is 10.
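The effect of the --umask values mentioned above comes down to bit arithmetic: the bits set in the umask are cleared from the permissions a program requests. The sketch below works through the three values from the text, assuming a file is created with the usual requested mode of 0666; the function name is made up for this example.

```python
def mode_after_umask(requested_mode, umask):
    """Permission bits a new file ends up with: requested bits minus masked bits."""
    return requested_mode & ~umask


# Most programs request 0o666 (rw-rw-rw-) when creating an ordinary file.
for umask in (0o077, 0o022, 0o000):
    print("umask %03o -> file mode %03o" % (umask, mode_after_umask(0o666, umask)))
# umask 077 -> file mode 600
# umask 022 -> file mode 644
# umask 000 -> file mode 666
```

So 077 leaves files readable by the buildslave account only, 022 adds read access for everyone else, and 000 adds write access as well.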

Other Buildslave Configuration¶

- unicode_encoding

This represents the encoding that buildbot should use when converting unicode commandline arguments into byte strings in order to pass to the operating system when spawning new processes.

The default value is what python's sys.getfilesystemencoding returns, which on Windows is 'mbcs', on Mac OSX is 'utf-8', and on Unix depends on your locale settings.

If you need a different encoding, this can be changed in your build slave's buildbot.tac file by adding a unicode_encoding argument to the BuildSlave constructor.

- allow_shutdown

allow_shutdown can be passed to the BuildSlave constructor in buildbot.tac. If set, it allows the buildslave to initiate a graceful shutdown, meaning that it will ask the master to shut down the slave when the current build, if any, is complete.

Setting allow_shutdown to 'file' will cause the buildslave to watch shutdown.stamp in basedir for updates to its mtime. When the mtime changes, the slave will request a graceful shutdown from the master. The file does not need to exist prior to starting the slave.

Setting allow_shutdown to 'signal' will set up a SIGHUP handler to start a graceful shutdown. When the signal is received, the slave will request a graceful shutdown from the master.

The default value is None, in which case this feature will be disabled.

Both master and slave must be at least version 0.8.3 for this feature to work.

s = BuildSlave(buildmaster_host, port, slavename, passwd, basedir,

keepalive, usepty, umask=umask, maxdelay=maxdelay,

unicode_encoding='utf-8', allow_shutdown='signal')

Upgrading an Existing Buildslave¶

If you have just installed a new version of Buildbot-slave, you may need to take some steps to upgrade it. If you are upgrading to version 0.8.2 or later, you can run

buildslave upgrade-slave /path/to/buildslave/dir

Version-specific Notes¶

Upgrading a Buildslave to Buildbot-slave-0.8.1¶

Before Buildbot version 0.8.1, the Buildbot master and slave were part of the same distribution. As of version 0.8.1, the buildslave is a separate distribution.

As of this release, you will need to install buildbot-slave to run a slave.

Any automatic startup scripts that had run buildbot start for previous versions should be changed to run buildslave start instead.

If you are running a version later than 0.8.1, then you can skip the remainder of this section: the upgrade-slave command will take care of this. If you are upgrading directly to 0.8.1, read on.

The existing buildbot.tac for any buildslaves running older versions will need to be edited or replaced. If the loss of cached buildslave state (e.g., for Source steps in copy mode) is not problematic, the easiest solution is to simply delete the slave directory and re-run buildslave create-slave.

If deleting the slave directory is problematic, the change to buildbot.tac is simple. On line 3, replace

from buildbot.slave.bot import BuildSlave

with

from buildslave.bot import BuildSlave

After this change, the buildslave should start as usual.
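If several slave directories need the same edit, the replacement can be scripted. The following sketch rewrites the import line wherever it appears in the text of a buildbot.tac; the function name `fix_tac_import` is made up for this example, and reading and writing the file is left to the caller.

```python
OLD_IMPORT = "from buildbot.slave.bot import BuildSlave"
NEW_IMPORT = "from buildslave.bot import BuildSlave"


def fix_tac_import(tac_text):
    """Return buildbot.tac contents with the pre-0.8.1 import line replaced."""
    fixed = []
    for line in tac_text.splitlines(True):   # True keeps the line endings
        if line.strip() == OLD_IMPORT:
            line = line.replace(OLD_IMPORT, NEW_IMPORT)
        fixed.append(line)
    return "".join(fixed)
```

Running the function on an already-updated file returns the text unchanged, so it is safe to apply more than once.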

Launching the daemons¶

Both the buildmaster and the buildslave run as daemon programs. To launch them, pass the working directory to the buildbot and buildslave commands, as appropriate:

# start a master

buildbot start [ BASEDIR ]

# start a slave

buildslave start [ SLAVE_BASEDIR ]

The BASEDIR is optional and can be omitted if the current directory contains the buildbot configuration (the buildbot.tac file).

buildbot start

This command will start the daemon and then return, so normally it will not produce any output. To verify that the programs are indeed running, look for a pair of files named twistd.log and twistd.pid that should be created in the working directory. twistd.pid contains the process ID of the newly-spawned daemon.

When the buildslave connects to the buildmaster, new directories will start appearing in its base directory. The buildmaster tells the slave to create a directory for each Builder which will be using that slave. All build operations are performed within these directories: CVS checkouts, compiles, and tests.

Once you get everything running, you will want to arrange for the buildbot daemons to be started at boot time. One way is to use cron, by putting them in a @reboot crontab entry [1]:

@reboot buildbot start [ BASEDIR ]

When you run crontab to set this up, remember to do it as the buildmaster or buildslave account! If you add this to your crontab when running as your regular account (or worse yet, root), then the daemon will run as the wrong user, quite possibly as one with more authority than you intended to provide.

It is important to remember that the environment provided to cron jobs and init scripts can be quite different than your normal runtime. There may be fewer environment variables specified, and the PATH may be shorter than usual. It is a good idea to test out this method of launching the buildslave by using a cron job with a time in the near future, with the same command, and then check twistd.log to make sure the slave actually started correctly. Common problems here are for /usr/local or ~/bin to not be on your PATH, or for PYTHONPATH to not be set correctly. Sometimes HOME is messed up too.

Some distributions may include conveniences to make starting buildbot at boot time easy. For instance, with the default buildbot package in Debian-based distributions, you may only need to modify /etc/default/buildbot (see also /etc/init.d/buildbot, which reads the configuration in /etc/default/buildbot).

Buildbot also comes with its own init scripts that provide support for controlling multi-slave and multi-master setups (mostly because they are based on the init script from the Debian package). With a little modification these scripts can be used both on Debian and RHEL-based distributions and may thus prove helpful to package maintainers who are working on buildbot (or those that haven't yet split buildbot into master and slave packages).

# install as /etc/default/buildslave

# or /etc/sysconfig/buildslave

master/contrib/init-scripts/buildslave.default

# install as /etc/default/buildmaster

# or /etc/sysconfig/buildmaster

master/contrib/init-scripts/buildmaster.default

# install as /etc/init.d/buildslave

slave/contrib/init-scripts/buildslave.init.sh

# install as /etc/init.d/buildmaster

slave/contrib/init-scripts/buildmaster.init.sh

# ... and tell sysvinit about them

chkconfig buildmaster reset

# ... or

update-rc.d buildmaster defaults

Logfiles¶

While a buildbot daemon runs, it emits text to a logfile, named twistd.log. A command like tail -f twistd.log is useful to watch the command output as it runs.

The buildmaster will announce any errors with its configuration file in the logfile, so it is a good idea to look at the log at startup time to check for any problems. Most buildmaster activities will cause lines to be added to the log.

Shutdown¶

To stop a buildmaster or buildslave manually, use:

buildbot stop [ BASEDIR ]

# or

buildslave stop [ SLAVE_BASEDIR ]

This simply looks for the twistd.pid file and kills whatever process is identified within.

At system shutdown, all processes are sent a SIGTERM. The buildmaster and buildslave will respond to this by shutting down normally.

The buildmaster will respond to a SIGHUP by re-reading its config file. Of course, this only works on Unix-like systems with signal support, and won't work on Windows. The following shortcut is available:

buildbot reconfig [ BASEDIR ]

When you update the Buildbot code to a new release, you will need to restart the buildmaster and/or buildslave before it can take advantage of the new code. You can do a buildbot stop BASEDIR and buildbot start BASEDIR in quick succession, or you can use the restart shortcut, which does both steps for you:

buildbot restart [ BASEDIR ]

Buildslaves can similarly be restarted with:

buildslave restart [ BASEDIR ]

There are certain configuration changes that are not handled cleanly by buildbot reconfig. If this occurs, buildbot restart is a more robust tool to fully switch over to the new configuration.

buildbot restart may also be used to start a stopped Buildbot instance. This behaviour is useful when writing scripts that stop, start and restart Buildbot.

A buildslave may also be gracefully shut down from the WebStatus status plugin. This is useful to shut down a buildslave without interrupting any current builds. The buildmaster will wait until the buildslave has finished all its current builds, and will then tell the buildslave to shut down.

Maintenance¶

The buildmaster can be configured to send out email notifications when a slave has been offline for a while. Be sure to configure the buildmaster with a contact email address for each slave so these notifications are sent to someone who can bring it back online.

If you find you can no longer provide a buildslave to the project, please let the project admins know, so they can put out a call for a replacement.

The Buildbot records status and logs output continually, each time a build is performed. The status tends to be small, but the build logs can become quite large. Each build and log are recorded in a separate file, arranged hierarchically under the buildmaster's base directory. To prevent these files from growing without bound, you should periodically delete old build logs. A simple cron job to delete anything older than, say, two weeks should do the job. The only trick is to leave the buildbot.tac and other support files alone, for which find's -mindepth argument helps skip everything in the top directory. You can use something like the following:

@weekly cd BASEDIR && find . -mindepth 2 -ipath './public_html/*' \

-prune -o -type f -mtime +14 -exec rm {} \;

@weekly cd BASEDIR && find twistd.log* -mtime +14 -exec rm {} \;

Alternatively, you can configure a maximum number of old logs to be kept using the --log-count command line option when running buildslave create-slave or buildbot create-master.

Troubleshooting¶

Here are a few hints on diagnosing common problems.

Starting the buildslave¶

Cron jobs are typically run with a minimal shell (/bin/sh, not /bin/bash), and tilde expansion is not always performed in such commands. You may want to use explicit paths, because the PATH is usually quite short and doesn't include anything set by your shell's startup scripts (.profile, .bashrc, etc). If you've installed buildbot (or other python libraries) to an unusual location, you may need to add a PYTHONPATH specification (note that python will do tilde-expansion on PYTHONPATH elements by itself). Sometimes it is safer to fully-specify everything:

@reboot PYTHONPATH=~/lib/python /usr/local/bin/buildbot \

start /usr/home/buildbot/basedir

Take the time to get the @reboot job set up. Otherwise, things will work fine for a while, but the first power outage or system reboot you have will stop the buildslave with nothing but the cries of sorrowful developers to remind you that it has gone away.

Connecting to the buildmaster¶

If the buildslave cannot connect to the buildmaster, the reason should be described in the twistd.log logfile. Some common problems are an incorrect master hostname or port number, or a mistyped bot name or password. If the buildslave loses the connection to the master, it is supposed to attempt to reconnect with an exponentially-increasing backoff. Each attempt (and the time of the next attempt) will be logged. If you get impatient, just manually stop and re-start the buildslave.

When the buildmaster is restarted, all slaves will be disconnected, and will attempt to reconnect as usual. The reconnect time will depend upon how long the buildmaster is offline (i.e. how far up the exponential backoff curve the slaves have travelled). Again, buildslave restart BASEDIR will speed up the process.
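To illustrate why the reconnect time depends on how long the master was offline, here is a minimal sketch of an exponentially-increasing backoff schedule. The function name and the initial delay, growth factor, and ceiling are assumptions chosen for illustration; they are not the values the buildslave actually uses.

```python
import itertools


def backoff_delays(initial=1.0, factor=2.0, max_delay=3600.0):
    """Yield successive reconnect delays in seconds, growing geometrically
    until they reach max_delay (all three values are illustrative)."""
    delay = initial
    while True:
        yield delay
        delay = min(delay * factor, max_delay)


# The first five waits under these assumed parameters.
first_five = list(itertools.islice(backoff_delays(), 5))
# first_five == [1.0, 2.0, 4.0, 8.0, 16.0]
```

The longer the master stays down, the further along this sequence each slave has progressed, and the longer it may wait before its next attempt. Restarting the slave resets it to the start of the sequence.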

[1] This @reboot syntax is understood by Vixie cron, which is the flavor usually provided with Linux systems. Other unices may have a cron that doesn't understand @reboot.

Contrib Scripts¶

While some features of Buildbot are included in the distribution, others are only available in contrib/ in the source directory. The latest versions of such scripts are available at http://github.com/buildbot/buildbot/tree/master/master/contrib.

Concepts¶

This chapter defines some of the basic concepts that the Buildbot uses. You'll need to understand how the Buildbot sees the world to configure it properly.

Source Stamps¶

Source code comes from repositories, provided by version control systems. Repositories are generally identified by URLs, e.g., git://github.com/buildbot/buildbot.git.

In these days of distributed version control systems, the same codebase may appear in multiple repositories. For example, https://github.com/mozilla/mozilla-central and http://hg.mozilla.org/mozilla-release both contain the Firefox codebase, although not exactly the same code.

Many projects are built from multiple codebases. For example, a company may build several applications based on the same core library. The "app" codebase and the "core" codebase are in separate repositories, but are compiled together and constitute a single project. Changes to either codebase should cause a rebuild of the application.

Most version control systems define some sort of revision that can be used (sometimes in combination with a branch) to uniquely specify a particular version of the source code.

To build a project, Buildbot needs to know exactly which version of each codebase it should build. It uses a source stamp to do so for each codebase; the collection of source stamps required for a project is called a source stamp set.
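One way to picture a source stamp set is as a mapping from codebase name to source stamp. The sketch below is a conceptual model only: the SourceStamp tuple, its field names, the helper make_source_stamp_set, and the example.org URLs are all hypothetical and do not reflect Buildbot's internal classes.

```python
from collections import namedtuple

# Hypothetical stand-in for a source stamp; field names are illustrative.
SourceStamp = namedtuple("SourceStamp", "codebase repository branch revision")


def make_source_stamp_set(stamps):
    """Index source stamps by codebase, rejecting duplicate codebases."""
    stamp_set = {}
    for stamp in stamps:
        if stamp.codebase in stamp_set:
            raise ValueError("duplicate codebase: %r" % (stamp.codebase,))
        stamp_set[stamp.codebase] = stamp
    return stamp_set


# The "app" and "core" codebases from the example above, one stamp each.
stamp_set = make_source_stamp_set([
    SourceStamp("app", "git://example.org/app.git", "master", "abc123"),
    SourceStamp("core", "git://example.org/core.git", "master", "def456"),
])
```

Each codebase contributes exactly one source stamp, so a build of the project knows which version of "app" and which version of "core" to check out.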

Version Control Systems¶

Buildbot supports a significant number of version control systems, so it treats them abstractly.

For purposes of deciding when to perform builds, Buildbot's change sources monitor repositories, and represent any updates to those repositories as changes. These change sources fall broadly into two categories: pollers which periodically check the repository for updates; and hooks, where the repository is configured to notify Buildbot whenever an update occurs.

This concept does not map perfectly to every version control system. For example, for CVS Buildbot must guess that version updates made to multiple files within a short time represent a single change; for DVCS's like Git, Buildbot records a change when a commit is pushed to the monitored repository, not when it is initially committed. We assume that the Changes arrive at the master in the same order in which they are committed to the repository.

When it comes time to actually perform a build, a scheduler prepares a source stamp set, as described above, based on its configuration. When the build begins, one or more source steps use the information in the source stamp set to actually check out the source code, using the normal VCS commands.

Tree Stability¶

Changes tend to arrive at a buildmaster in bursts. In many cases, these bursts of changes are meant to be taken together. For example, a developer may have pushed multiple commits to a DVCS that comprise the same new feature or bugfix. To avoid trying to build every change, Buildbot supports the notion of tree stability, by waiting for a burst of changes to finish before starting to schedule builds. This is implemented as a timer, with builds not scheduled until no changes have occurred for the duration of the timer.
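The timer behaviour can be modelled with a few lines of Python. This is a sketch of the idea rather than Buildbot's scheduler code: stable_build_time and its arguments are hypothetical names, times are plain numbers in seconds, and only the first build after a burst is considered.

```python
def stable_build_time(change_times, tree_stable_timer):
    """Return the time at which the first build would be scheduled: the
    first moment at which tree_stable_timer seconds have passed with no
    new change. Returns None if there are no changes."""
    times = sorted(change_times)
    if not times:
        return None
    for current, following in zip(times, times[1:]):
        # A gap at least as long as the timer means the timer expired
        # before the following change arrived.
        if following - current >= tree_stable_timer:
            return current + tree_stable_timer
    return times[-1] + tree_stable_timer


# Three changes in quick succession are built together, 60 seconds
# after the last one.
burst = stable_build_time([0, 30, 50], 60)   # 110
# Two widely separated changes: the first is built on its own.
apart = stable_build_time([0, 100], 60)      # 60
```

Each new change within the window restarts the timer, which is why a burst delays the build until the tree has been quiet for the full duration.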

How Different VC Systems Specify Sources¶

For CVS, the static specifications are repository and module. In addition to those, each build uses a timestamp (or omits the timestamp to mean the latest) and branch tag (which defaults to HEAD). These parameters collectively specify a set of sources from which a build may be performed.

Subversion combines the repository, module, and branch into a single Subversion URL parameter. Within that scope, source checkouts can be specified by a numeric revision number (a repository-wide monotonically-increasing marker, such that each transaction that changes the repository is indexed by a different revision number), or a revision timestamp. When branches are used, the repository and module form a static baseURL, while each build has a revision number and a branch (which defaults to a statically-specified defaultBranch). The baseURL and branch are simply concatenated together to derive the svnurl to use for the checkout.
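The concatenation rule is simple enough to show directly. The helper below is a hypothetical illustration, not a Buildbot function, and the example.org URL is made up; it only demonstrates how a baseURL and a branch combine into the URL used for the checkout.

```python
def checkout_url(base_url, default_branch, branch=None):
    """Concatenate the static base URL with the build's branch, falling
    back to the default branch when the build does not name one."""
    return base_url + (branch if branch is not None else default_branch)


# A build that names no branch uses defaultBranch.
trunk_url = checkout_url("svn://svn.example.org/repo/", "trunk")
# trunk_url == "svn://svn.example.org/repo/trunk"

# A build on a specific branch replaces defaultBranch.
branch_url = checkout_url("svn://svn.example.org/repo/", "trunk", "branches/1.5")
# branch_url == "svn://svn.example.org/repo/branches/1.5"
```

Because the two strings are joined with no separator, the base URL should end with a slash (or the branch should begin with one).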

Perforce is similar. The server is specified through a P4PORT parameter. Module and branch are specified in a single depot path, and revisions are depot-wide. When branches are used, the p4base and defaultBranch are concatenated together to produce the depot path.

Bzr (which is a descendant of Arch/Bazaar, and is frequently referred to as "Bazaar") has the same sort of repository-vs-workspace model as Arch, but the repository data can either be stored inside the working directory or kept elsewhere (either on the same machine or on an entirely different machine). For the purposes of Buildbot (which never commits changes), the repository is specified with a URL and a revision number.

The most common way to obtain read-only access to a bzr tree is via HTTP, simply by making the repository visible through a web server like Apache. Bzr can also use FTP and SFTP servers, if the buildslave process has sufficient privileges to access them. Higher performance can be obtained by running a special Bazaar-specific server. None of these matter to the buildbot: the repository URL just has to match the kind of server being used. The repoURL argument provides the location of the repository.

Branches are expressed as subdirectories of the main central repository, which means that if branches are being used, the BZR step is given a baseURL and defaultBranch instead of getting the repoURL argument.

Darcs doesn't really have the notion of a single master repository. Nor does it really have branches. In Darcs, each working directory is also a repository, and there are operations to push and pull patches from one of these repositories to another. For the Buildbot's purposes, all you need to do is specify the URL of a repository that you want to build from. The build slave will then pull the latest patches from that repository and build them. Multiple branches are implemented by using multiple repositories (possibly living on the same server).

Builders which use Darcs therefore have a static repourl which specifies the location of the repository. If branches are being used, the source Step is instead configured with a baseURL and a defaultBranch, and the two strings are simply concatenated together to obtain the repository's URL. Each build then has a specific branch which replaces defaultBranch, or just uses the default one. Instead of a revision number, each build can have a context, which is a string that records all the patches that are present in a given tree (this is the output of darcs changes --context, and is considerably less concise than, e.g. Subversion's revision number, but the patch-reordering flexibility of Darcs makes it impossible to provide a shorter useful specification).

Mercurial is like Darcs, in that each branch is stored in a separate repository. The repourl, baseURL, and defaultBranch arguments are all handled the same way as with Darcs. The revision, however, is the hash identifier returned by hg identify.

Git also follows a decentralized model, and each repository can have several branches and tags. The source Step is configured with a static repourl which specifies the location of the repository. In addition, an optional branch parameter can be specified to check out code from a specific branch instead of the default master branch. The revision is specified as a SHA1 hash as returned by e.g. git rev-parse. No attempt is made to ensure that the specified revision is actually a subset of the specified branch.

Monotone is another system that follows a decentralized model, where each repository can have several branches and tags. The source Step is configured with static repourl and branch parameters, which specify the location of the repository and the branch to use. The revision is specified as a SHA1 hash as returned by e.g. mtn automate select w:. No attempt is made to ensure that the specified revision is actually a subset of the specified branch.

Changes¶

Who¶

Each Change has a who attribute, which specifies which developer is responsible for the change. This is a string which comes from a namespace controlled by the VC repository. Frequently this means it is a username on the host which runs the repository, but not all VC systems require this. Each StatusNotifier will map the who attribute into something appropriate for its particular means of communication: an email address, an IRC handle, etc.

This who attribute is also parsed and stored into Buildbot's database (see User Objects). Currently, only who attributes in Changes from git repositories are translated into user objects, but in the future all incoming Changes will have their who parsed and stored.

Files¶

Each Change also has a list of files, which are just the tree-relative filenames of any files that were added, deleted, or modified for this Change. These filenames are used by the fileIsImportant function (in the Scheduler) to decide whether it is worth triggering a new build or not. For example, the following function could be used to run a build only if a C file was checked in:

def has_C_files(change):
    for name in change.files:
        if name.endswith(".c"):
            return True
    return False

Certain BuildSteps can also use the list of changed files to run a more targeted series of tests, e.g. the python_twisted.Trial step can run just the unit tests that provide coverage for the modified .py files instead of running the full test suite.
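The same idea generalizes to any set of filename patterns. The sketch below builds a fileIsImportant-style callable from shell-style patterns using the standard fnmatch module. The names make_file_filter and FakeChange are hypothetical; FakeChange merely stands in for a real Change so that the example runs on its own.

```python
import fnmatch
from collections import namedtuple


def make_file_filter(patterns):
    """Build a fileIsImportant-style callable that returns True when any
    changed file matches one of the shell-style patterns."""
    def file_is_important(change):
        return any(fnmatch.fnmatch(name, pattern)
                   for name in change.files
                   for pattern in patterns)
    return file_is_important


# Hypothetical stand-in for a Change: only the files attribute is modelled.
FakeChange = namedtuple("FakeChange", "files")

is_c_source = make_file_filter(["*.c", "*.h"])
code_change = is_c_source(FakeChange(files=["src/main.c", "README"]))  # True
docs_change = is_c_source(FakeChange(files=["docs/index.rst"]))        # False
```

Note that fnmatch's * also matches path separators, so "*.c" matches files in any subdirectory.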

Comments¶

The Change also has a comments attribute, which is a string containing any checkin comments.

Project¶

The project attribute of a change or source stamp describes the project to which it corresponds, as a short human-readable string. This is useful in cases where multiple independent projects are built on the same buildmaster. In such cases, it can be used to control which builds are scheduled for a given commit, and to limit status displays to only one project.

Repository¶

This attribute specifies the repository in which this change occurred. In the case of DVCS's, this information may be required to check out the committed source code. However, using the repository from a change has security risks: if Buildbot is configured to blindly trust this information, then it may easily be tricked into building arbitrary source code, potentially compromising the buildslaves and the integrity of subsequent builds.

Codebase¶

This attribute specifies the codebase to which this change was made. As described above, multiple repositories may contain the same codebase. A change's codebase is usually determined by the codebaseGenerator configuration. By default the codebase is ''; this value is used automatically for single-codebase configurations.
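As a rough illustration, a codebaseGenerator can be a function that looks up the change's repository in a table. The sketch below assumes the generator receives a change dictionary with a 'repository' key; the codebase name "firefox" is illustrative, and a plain dict stands in for the BuildmasterConfig dictionary c so that the example runs outside master.cfg.

```python
# Map each known repository URL to a codebase name (names are illustrative).
ALL_REPOSITORIES = {
    "https://github.com/mozilla/mozilla-central": "firefox",
    "http://hg.mozilla.org/mozilla-release": "firefox",
}


def codebase_generator(chdict):
    """Return the codebase for a change dictionary, keyed on its repository."""
    return ALL_REPOSITORIES[chdict["repository"]]


# In master.cfg, c is the BuildmasterConfig dictionary; a plain dict
# stands in for it here so the sketch is self-contained.
c = {}
c["codebaseGenerator"] = codebase_generator
```

With a lookup table like this, a change from an unlisted repository raises KeyError instead of being assigned a codebase, so only repositories named in the configuration are recognized.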

Revision¶

Each Change can have a revision attribute, which describes how to get a tree with a specific state: a tree which includes this Change (and all that came before it) but none that come after it. If this information is unavailable, the revision attribute will be None. These revisions are provided by the ChangeSource.

Revisions are always strings.

- CVS

- revision is the seconds since the epoch as an integer.

- SVN

- revision is the revision number

- Darcs

- revision is a large string, the output of darcs changes --context

- Mercurial